The Token Economy: Why Optimizing AI Usage Is Like Managing a Power Grid

Most token optimization advice tells you to use fewer tokens. That's the wrong problem.

If you're on a fixed-allocation AI plan — Claude Max, ChatGPT Pro, or any subscription with a token budget that resets on a rolling window — unused tokens don't save you money. They evaporate. You already paid for them. The real question isn't "how do I spend less?" but "how do I extract maximum value from a fixed allocation?"

This reframing changes everything. You're no longer doing cloud cost optimization. You're doing capacity planning. And the closest analogue isn't your AWS bill — it's the power grid.

Electrons and Tokens

A power grid operator doesn't try to generate less electricity. They try to ensure every watt generated gets consumed productively. Nuclear plants provide baseload. Solar and wind fill variable demand. Battery storage absorbs surplus and discharges during peaks. Economic dispatch algorithms route power where it's needed, when it's needed, at the lowest marginal cost.

Now map that to AI tokens:

| Power Grid | Token Economy |
|---|---|
| Electricity | Tokens |
| Generator types (nuclear, solar, wind) | Model tiers (Opus, Sonnet, Haiku) |
| Load profiles (residential, industrial) | Project profiles (creative exploration, batch execution) |
| Peak demand forecasting | Interactive session prediction |
| Economic dispatch | Model-aware task routing |
| Battery storage | Token reservation for future sessions |
| Grid frequency regulation | Real-time budget rebalancing |

The parallel isn't just cute — it's structurally precise. Both systems have heterogeneous supply (multiple generation types / model tiers), variable demand (usage patterns that shift by time of day and day of week), rolling capacity windows, and a perishability problem (unused capacity expires).

The Perishability Problem

Here's the math that makes this concrete. Claude Max's 5x plan provides a token budget across two rolling windows: a 5-hour window and a 7-day window. A day has room for nearly five back-to-back 5-hour windows (24 / 5 = 4.8). If you're a developer who works intensively for three hours in the evening and barely touches the tool during the day, you draw on roughly one of them and leave massive capacity on the table during the other 21 hours.

On a per-token billing plan, idle time costs nothing. On a fixed allocation, idle time is waste — tokens you paid for that generated zero value.

This is identical to airline revenue management. An empty seat on a departed flight is revenue that can never be recovered. Airlines solve this with sophisticated yield management: dynamic pricing, overbooking algorithms, last-minute fare adjustments. The seat has zero marginal cost once the flight is scheduled — the only question is whether someone sits in it.

Your token allocation works the same way. The marginal cost of using an already-allocated token is zero. The only question is whether it does useful work before the window resets.
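A quick sketch of that arithmetic in Python. It assumes, as a simplification, that each 5-hour window opens at the first request made after the previous one expires, and it ignores the 7-day cap entirely; the function name and the hour-granularity input are illustrative, not documented plan mechanics.

```python
from datetime import timedelta

# Window length taken from the 5-hour rolling window described above.
WINDOW = timedelta(hours=5)


def windows_opened(active_hours: list[int]) -> int:
    """Count the rolling windows one day's activity opens.

    Simplifying assumption: a window starts at the first request made after
    the previous window has expired and lasts exactly WINDOW.
    """
    count = 0
    window_end = None
    for hour in sorted(active_hours):
        start = timedelta(hours=hour)
        if window_end is None or start >= window_end:
            count += 1
            window_end = start + WINDOW
    return count


# Back-to-back windows that fit in one day: 24h / 5h = 4.8.
MAX_WINDOWS_PER_DAY = timedelta(hours=24) / WINDOW

evening_user = [19, 20, 21]  # three evening hours of intensive work
used = windows_opened(evening_user)
print(f"{used} of {MAX_WINDOWS_PER_DAY:.1f} possible windows used "
      f"({used / MAX_WINDOWS_PER_DAY:.0%})")
```

For the evening-only developer this prints `1 of 4.8 possible windows used (21%)`. Window count is only a proxy: how many tokens each window holds, and whether the weekly cap binds first, depend on the plan.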

Two Archetypes, Two Strategies

The optimization strategy depends on how you work.

The After-Hours Creative has a day job and uses AI intensively two or three evenings per week. Their critical metric is availability: "Were tokens available when I sat down to work?" They need guaranteed headroom during peak sessions, which means the optimizer must learn their usage patterns and defend those windows. Autonomous batch work — code analysis, documentation generation, test suites — fills the interstitial hours like water flowing around rocks.

The Full-Time Developer burns tokens steadily throughout working hours. Their optimization lever is model delegation: use Haiku (the fastest, cheapest tier) for execution tasks during the day, reserve Sonnet and Opus for code review, architecture decisions, and complex reasoning. Overnight capacity runs batch jobs. Their critical metric is throughput: "How much more work got done per token dollar?"

Both archetypes share the same underlying problem — a multi-dimensional scheduling optimization across model tiers, time windows, and task priorities. They just weight the constraints differently.

Economic Dispatch for AI

In power systems, economic dispatch is the algorithm that decides which generators to run and at what output level to meet demand at minimum cost. The cheapest generators run first (merit order), and expensive peakers only fire when demand exceeds baseload capacity.
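The merit-order idea fits in a few lines. This is a textbook simplification with made-up plant capacities and costs; real dispatch also has to respect ramp rates, transmission limits, and unit commitment, none of which are modeled here.

```python
from dataclasses import dataclass


@dataclass
class Generator:
    name: str
    capacity_mw: float
    marginal_cost: float  # $/MWh (illustrative values below)


def merit_order_dispatch(generators: list[Generator], demand_mw: float) -> dict[str, float]:
    """Meet demand by loading generators cheapest-first (merit order)."""
    dispatch: dict[str, float] = {}
    remaining = demand_mw
    for gen in sorted(generators, key=lambda g: g.marginal_cost):
        output = min(gen.capacity_mw, remaining)
        dispatch[gen.name] = output
        remaining -= output
    if remaining > 0:
        # Not enough generation online: the operator must shed load.
        raise ValueError(f"demand exceeds capacity by {remaining} MW")
    return dispatch


fleet = [
    Generator("gas_peaker", capacity_mw=400, marginal_cost=120.0),
    Generator("nuclear", capacity_mw=1000, marginal_cost=10.0),
    Generator("wind", capacity_mw=300, marginal_cost=0.0),
]
print(merit_order_dispatch(fleet, demand_mw=1200))
# {'wind': 300, 'nuclear': 900, 'gas_peaker': 0}
```

The peaker only produces once demand exceeds the 1,300 MW of cheaper capacity, which is exactly the behavior the token router below mirrors with model tiers.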

The AI equivalent is a model router that assigns each task to the cheapest model capable of completing it:

Running unit tests? Haiku is sufficient — it's an execution task with clear instructions. Reviewing a pull request for security issues? Sonnet is required — it needs analytical judgment. Designing authentication architecture? Opus is necessary — novel reasoning and cross-domain synthesis.

The router considers task metadata, historical model performance on similar tasks, and remaining per-model budgets. It's bin packing with multiple bin types, where each bin has its own capacity curve and cost profile.
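A minimal router sketch, assuming each task carries a minimum acceptable tier, per-tier token estimates, and a success rate learned from history. The tier names come from this post; the relative cost ratios, the function signature, and the "expected cost per successful completion" scoring rule are assumptions chosen for illustration.

```python
from typing import Optional

TIERS = ("haiku", "sonnet", "opus")  # ordered cheapest / least capable first

# Hypothetical relative cost of one token on each tier (assumed ratios).
RELATIVE_COST = {"haiku": 1.0, "sonnet": 4.0, "opus": 20.0}


def route(task_type: str, min_tier: str, est_tokens: dict[str, int],
          success_rate: dict[tuple[str, str], float],
          remaining: dict[str, int]) -> Optional[str]:
    """Pick the tier with the lowest expected cost per successful completion.

    Only tiers at or above min_tier are eligible; a tier is skipped when its
    remaining budget cannot cover the estimate. Missing history defaults to a
    success rate of 1.0. Ties go to the cheaper tier.
    """
    best = None
    for tier in TIERS[TIERS.index(min_tier):]:
        if remaining[tier] < est_tokens[tier]:
            continue  # not enough budget left in this tier's window
        rate = success_rate.get((task_type, tier), 1.0)
        if rate <= 0:
            continue  # history says this tier never succeeds at this task type
        expected_cost = est_tokens[tier] * RELATIVE_COST[tier] / rate
        if best is None or expected_cost < best[0]:
            best = (expected_cost, tier)
    return best[1] if best else None  # None means: defer to a later window


estimates = {"haiku": 5_000, "sonnet": 4_000, "opus": 3_000}
budget = {"haiku": 200_000, "sonnet": 80_000, "opus": 20_000}
print(route("unit_tests", "haiku", estimates, {}, budget))  # haiku
# If history shows Haiku rarely finishes this task type, Sonnet wins.
print(route("unit_tests", "haiku", estimates, {("unit_tests", "haiku"): 0.1}, budget))  # sonnet
```

Dividing by the success rate is one simple way to fold history in: a cheap model that usually fails is not actually cheap once retries are counted.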

Formally, this is a variant of the job shop scheduling problem with resource constraints. The objective is to maximize the total value delivered (priority-weighted task completion) subject to per-model token caps per window, reserved capacity for interactive sessions, task dependency ordering, and model-appropriateness constraints. As a secondary objective, minimize the tokens that expire unused at window boundaries.
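One way to write that down, as a sketch with assumed notation (none of these symbols appear elsewhere in this post): $x_{i,m,w} \in \{0,1\}$ says task $i$ runs on model $m$ in window $w$; $p_i$ is the task's priority weight; $c_{i,m}$ its estimated token cost on model $m$; $B_{m,w}$ the model's cap in window $w$; $R_{m,w}$ the portion reserved for interactive sessions; $A_i$ the set of models appropriate for task $i$; $j \prec i$ means task $i$ depends on task $j$; and $\lambda \ge 0$ weights tokens that expire unused.

$$
\begin{aligned}
\max_{x}\quad & \sum_{i}\sum_{m}\sum_{w} p_i\, x_{i,m,w} \;-\; \lambda \sum_{m}\sum_{w}\Big(B_{m,w} - R_{m,w} - \sum_{i} c_{i,m}\, x_{i,m,w}\Big) \\
\text{s.t.}\quad & \sum_{i} c_{i,m}\, x_{i,m,w} \le B_{m,w} - R_{m,w} && \forall m, w \\
& \sum_{m}\sum_{w} x_{i,m,w} \le 1 && \forall i \\
& x_{i,m,w} = 0 && \forall i,\ \forall m \notin A_i,\ \forall w \\
& \sum_{m}\sum_{w' \le w} x_{i,m,w'} \le \sum_{m}\sum_{w' \le w} x_{j,m,w'} && \forall\, j \prec i,\ \forall w \\
& x_{i,m,w} \in \{0,1\}
\end{aligned}
$$

Because $B_{m,w}$ and $R_{m,w}$ are constants, the expiry term reduces to a reward of $\lambda$ per token consumed, which is the perishability argument in algebraic form. The dependency constraint lets task $i$ be scheduled by window $w$ only if task $j$ is scheduled by then as well; a 7-day cap would add one more capacity constraint of the same shape, summed over the windows inside the week.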

This is NP-hard in the general case, but readily tractable with heuristics at human scale: you're dealing with dozens of tasks, three model tiers, and two time windows. A greedy algorithm with priority-weighted, model-aware bin packing should get roughly 90% of the theoretical optimum.
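A minimal sketch of such a greedy pass, assuming each task carries a priority, a minimum tier, and per-tier token estimates, and that the per-tier capacities passed in are already net of interactive reservations. Task names, numbers, and the function signature are hypothetical.

```python
from dataclasses import dataclass

TIERS = ("haiku", "sonnet", "opus")  # cheapest first


@dataclass
class Task:
    name: str
    priority: float
    min_tier: str
    est_tokens: dict[str, int]  # estimated tokens for each eligible tier


def greedy_schedule(tasks: list[Task], capacity: dict[str, int]):
    """Greedy priority-weighted, model-aware bin packing.

    Highest-priority tasks are placed first, each on the cheapest eligible
    tier that still has room; anything that fits nowhere is deferred.
    """
    remaining = dict(capacity)
    plan: list[tuple[str, str]] = []
    deferred: list[str] = []
    for task in sorted(tasks, key=lambda t: t.priority, reverse=True):
        for tier in TIERS[TIERS.index(task.min_tier):]:
            cost = task.est_tokens[tier]
            if cost <= remaining[tier]:
                remaining[tier] -= cost
                plan.append((task.name, tier))
                break
        else:  # no eligible tier had room: push to the next window
            deferred.append(task.name)
    return plan, deferred, remaining


tasks = [
    Task("docs", 3, "haiku", {"haiku": 6_000, "sonnet": 6_000, "opus": 6_000}),
    Task("auth-design", 9, "opus", {"opus": 2_000}),
    Task("unit-tests", 5, "haiku", {"haiku": 6_000, "sonnet": 6_000, "opus": 6_000}),
    Task("pr-review", 7, "sonnet", {"sonnet": 4_000, "opus": 4_000}),
]
plan, deferred, left = greedy_schedule(tasks, {"haiku": 10_000, "sonnet": 5_000, "opus": 2_000})
print(plan)      # [('auth-design', 'opus'), ('pr-review', 'sonnet'), ('unit-tests', 'haiku')]
print(deferred)  # ['docs']
```

Sorting by raw priority is one choice; sorting by priority per estimated token (the classic density heuristic for knapsack problems) is a common alternative when task sizes vary widely.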

Demand Forecasting

Power grids forecast demand to pre-position generation capacity. Token systems can do the same by analyzing historical usage patterns.

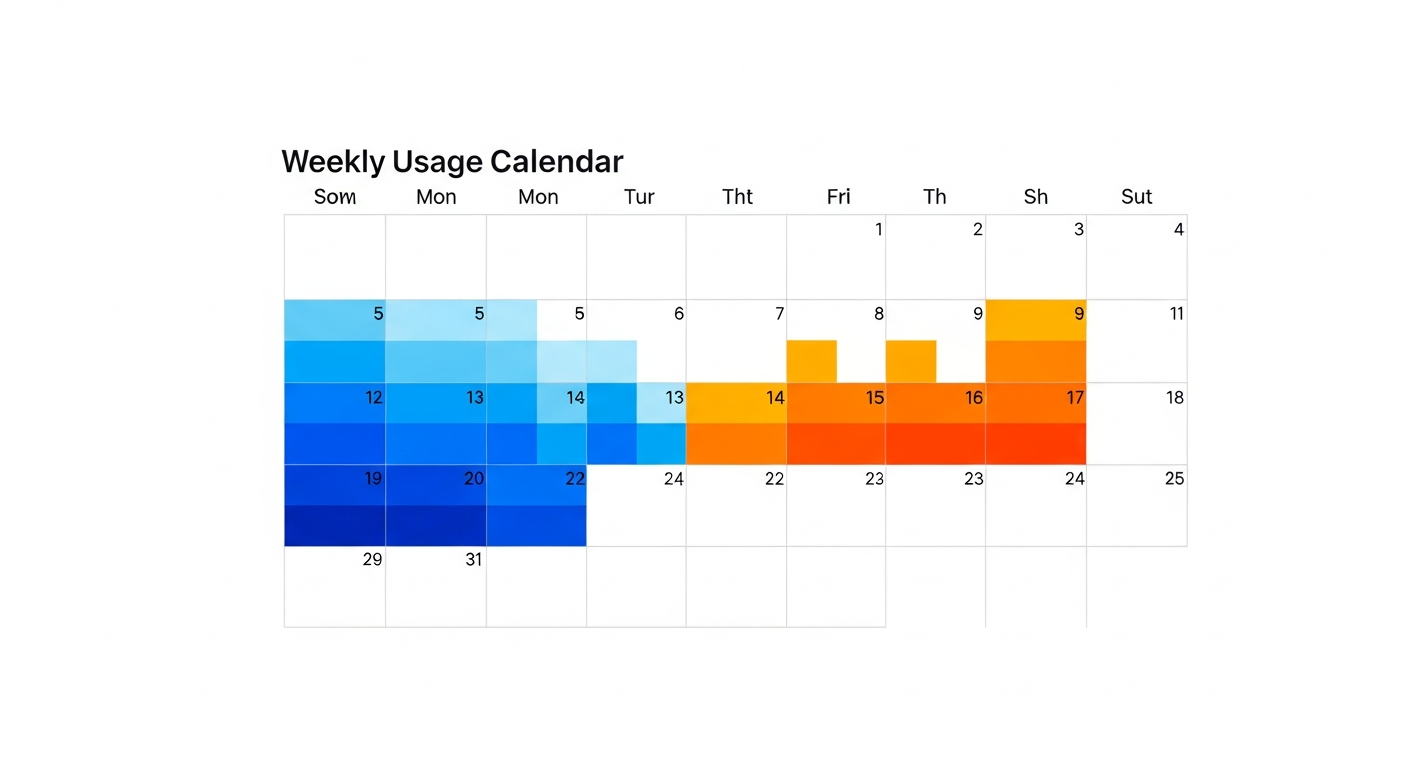

Your JSONL usage history — the raw log of every API call — contains a usage heatmap waiting to be extracted. "You typically burn 60K tokens between 18:00 and 23:00 on Tuesdays and Thursdays." "Weekend usage is light — good window for batch work." "Monday mornings spike, probably standup prep."
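A minimal sketch of that extraction, assuming each JSONL line may carry an ISO-8601 `timestamp` and a `message.usage` object with `input_tokens` and `output_tokens`. That resembles Claude Code's session logs, but treat the field names as assumptions and check them against your own files. The sketch counts only input and output tokens (any cache-related fields are ignored) and buckets by UTC unless you pass a local `tz`.

```python
import json
from collections import defaultdict
from datetime import datetime, timezone, tzinfo

DAYS = ("Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun")


def usage_heatmap(lines, tz: tzinfo = timezone.utc) -> dict[tuple[str, int], int]:
    """Sum tokens per (weekday, hour) bucket from JSONL log lines.

    Assumed record shape (verify against your own logs):
    {"timestamp": "...Z", "message": {"usage": {"input_tokens": N, "output_tokens": M}}}
    Records without a timestamp or a usage block are skipped.
    """
    heat: dict[tuple[str, int], int] = defaultdict(int)
    for line in lines:
        if not line.strip():
            continue
        record = json.loads(line)
        message = record.get("message")
        timestamp = record.get("timestamp")
        if not isinstance(message, dict) or not timestamp:
            continue
        usage = message.get("usage")
        if not usage:
            continue
        # fromisoformat() only accepts a trailing "Z" from Python 3.11 on.
        when = datetime.fromisoformat(timestamp.replace("Z", "+00:00")).astimezone(tz)
        tokens = usage.get("input_tokens", 0) + usage.get("output_tokens", 0)
        heat[(DAYS[when.weekday()], when.hour)] += tokens
    return dict(heat)


sample = [
    '{"timestamp": "2025-01-07T19:15:00.000Z", "message": {"usage": {"input_tokens": 1000, "output_tokens": 500}}}',
    '{"timestamp": "2025-01-07T19:40:00.000Z", "message": {"usage": {"input_tokens": 200, "output_tokens": 300}}}',
    '{"timestamp": "2025-01-07T19:41:00.000Z", "message": {"role": "user", "content": "hi"}}',
    '{"timestamp": "2025-01-09T20:05:00.000Z", "message": {"usage": {"input_tokens": 4000, "output_tokens": 1000}}}',
]
print(usage_heatmap(sample))
# {('Tue', 19): 2000, ('Thu', 20): 5000}
```

Averaging these buckets over several weeks gives the "Tuesdays and Thursdays, 18:00 to 23:00" style of forecast described above.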

This demand forecast drives scheduling decisions. If the system knows you'll want 40K tokens of interactive Opus capacity at 7 PM Thursday, it can schedule Haiku batch jobs throughout the day and hold Opus allocation in reserve. If the reservation window passes unused, tokens release back to the pool for reallocation.
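A minimal sketch of that reservation logic, with hypothetical class names and budgets. The "grace period" after which an unused reservation lapses is an assumed policy, and consumption tracking is left out to keep the example small.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Reservation:
    model: str
    tokens: int
    session_start: datetime  # when the interactive session is expected
    grace: timedelta         # how long to keep holding if nobody shows up


class ReservationBook:
    """Holds capacity back from batch work until a reservation lapses.

    `budgets` is each model's remaining allocation in the current window.
    """

    def __init__(self, budgets: dict[str, int]):
        self.budgets = dict(budgets)
        self.reservations: list[Reservation] = []

    def reserve(self, model: str, tokens: int, session_start: datetime,
                grace: timedelta = timedelta(hours=1)) -> None:
        self.reservations.append(Reservation(model, tokens, session_start, grace))

    def held(self, model: str, now: datetime) -> int:
        # A reservation holds until session_start + grace; after that it
        # lapses and its tokens count as available to batch work again.
        return sum(r.tokens for r in self.reservations
                   if r.model == model and now < r.session_start + r.grace)

    def available_for_batch(self, model: str, now: datetime) -> int:
        return max(0, self.budgets[model] - self.held(model, now))


book = ReservationBook({"opus": 100_000, "haiku": 500_000})
thursday_7pm = datetime(2025, 1, 9, 19, 0)
book.reserve("opus", 40_000, thursday_7pm, grace=timedelta(hours=2))

print(book.available_for_batch("opus", datetime(2025, 1, 9, 14, 0)))   # 60000
print(book.available_for_batch("opus", datetime(2025, 1, 9, 21, 30)))  # 100000
print(book.available_for_batch("haiku", datetime(2025, 1, 9, 14, 0)))  # 500000
```

Haiku batch work is untouched all day while 40K of Opus stays protected; at 21:30, with no session having claimed it, the hold lapses and the full Opus budget returns to the pool.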

It's the same principle as a hotel's revenue management system: block rooms for expected high-value guests, sell remaining inventory to walk-ins, and release unsold blocks at the last minute to avoid spoilage.

The Kanban Bridge

The most interesting architectural question is where task priority comes from. In a power grid, dispatch priority is driven by economics — cheapest generation first, load shedding as a last resort. In a token economy, priority should come from where you actually track work.

If you use a Kanban board (GitLab issues, Linear tickets, GitHub Projects), board position maps to scheduling urgency. "In Progress" beats "Backlog" automatically. Deadlines create urgency curves that increase priority as they approach. Moving a card on the board adjusts the optimizer's scheduling in real time.
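A minimal sketch of a board-driven priority function. The column names, their weights, the 14-day horizon, and the linear urgency ramp are all assumptions for illustration; map them to your own board.

```python
from datetime import date
from typing import Optional

# Hypothetical column weights; adapt to your board's actual columns.
COLUMN_WEIGHT = {"In Progress": 3.0, "Todo": 2.0, "Backlog": 1.0, "Done": 0.0}


def task_priority(column: str, deadline: Optional[date] = None,
                  today: Optional[date] = None, horizon_days: int = 14) -> float:
    """Board column sets the base priority; an approaching deadline raises it.

    Urgency ramps linearly from 0 (deadline horizon_days or more away) to 1
    (deadline today or overdue), so a deadline can at most double priority.
    """
    base = COLUMN_WEIGHT.get(column, 0.0)
    if base == 0.0 or deadline is None:
        return base  # Done/unknown columns get nothing; no deadline, no boost
    today = today or date.today()
    days_left = (deadline - today).days
    urgency = min(1.0, max(0.0, 1.0 - days_left / horizon_days))
    return base * (1.0 + urgency)


print(task_priority("In Progress"))                                    # 3.0
print(task_priority("Backlog", date(2025, 1, 15), date(2025, 1, 8)))   # 1.5
```

Moving a card only changes `column`, so re-running this over the board's current state is enough to re-rank the queue.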

This creates a feedback loop: your project management tool becomes the control interface for your AI compute allocation. Drag a card to "In Progress" and the optimizer starts allocating tokens to that project's tasks. Move it to "Done" and those tokens free up for the next priority.

Why This Matters Now

The token economy is real and growing. As AI moves from novelty to infrastructure, token allocation becomes a resource management problem comparable to cloud compute, electricity, or bandwidth.

Several observations make this increasingly urgent:

Tokens will get cheaper but probably never free. Compute has real costs: silicon, electricity, cooling, real estate. The trajectory is clear (prices per token have dropped by orders of magnitude in two years), but so is the floor. Inference will always cost something.

Fixed-allocation plans create optimization opportunities that per-token billing doesn't. When you pay per token, the optimal strategy is simply "use fewer tokens." When you pay a flat rate for a fixed allocation, the optimal strategy inverts: use every allocated token productively. This is a fundamentally different problem requiring different tools.

Power users are the most constrained. People doing exploratory and creative work need both high-model-tier access (Opus for novel reasoning) and high volume (hundreds of thousands of tokens per week for iterative development). They're simultaneously model-constrained and volume-constrained — the hardest optimization problem.

The productivity gap is purely an optimization problem. The difference between completing three projects per week and five projects per week, on the same subscription, isn't about working harder. It's about whether your tokens do useful work during the hours you're asleep, in meetings, or eating lunch.

The tokens are already paid for. It's just a question of whether they work for you or evaporate.

Building the Foundation

We've started building this. TokenPilot v0.1.0 — an open-source autonomous token budget scheduler — lays the foundation with JSONL usage parsing, a SQLite task queue, headless execution with budget safety controls, and execution history reporting. It watches your token budget, queues work, and executes tasks autonomously when you have capacity to spare.
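To make "SQLite task queue" and "budget safety controls" concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The schema, function names, and the 20% safety margin are hypothetical and not TokenPilot's actual code.

```python
import sqlite3
from typing import Optional

# Hypothetical schema for illustration only.
SCHEMA = """
CREATE TABLE IF NOT EXISTS tasks (
    id         INTEGER PRIMARY KEY,
    prompt     TEXT    NOT NULL,
    priority   INTEGER NOT NULL DEFAULT 0,
    est_tokens INTEGER NOT NULL,
    status     TEXT    NOT NULL DEFAULT 'queued'
)
"""


def next_runnable(conn: sqlite3.Connection, remaining_tokens: int,
                  safety_margin: float = 0.2) -> Optional[tuple]:
    """Highest-priority queued task whose estimate fits with headroom to spare.

    The margin leaves part of the remaining budget untouched so an
    underestimated task cannot drain the window.
    """
    limit = int(remaining_tokens * (1 - safety_margin))
    return conn.execute(
        "SELECT id, prompt, est_tokens FROM tasks "
        "WHERE status = 'queued' AND est_tokens <= ? "
        "ORDER BY priority DESC, id LIMIT 1",
        (limit,),
    ).fetchone()


def claim(conn: sqlite3.Connection, task_id: int) -> bool:
    """Mark a task running; the status check stops a second claim succeeding."""
    cur = conn.execute(
        "UPDATE tasks SET status = 'running' WHERE id = ? AND status = 'queued'",
        (task_id,),
    )
    conn.commit()
    return cur.rowcount == 1


conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO tasks (prompt, priority, est_tokens) VALUES (?, ?, ?)",
    [("summarize logs", 5, 30_000), ("refactor module", 9, 45_000), ("write docs", 5, 10_000)],
)
task = next_runnable(conn, remaining_tokens=50_000)
print(task)  # (1, 'summarize logs', 30000)
```

The priority-9 refactor is skipped because its 45K estimate exceeds the 40K that the margin allows, which is the kind of guard a headless runner needs before launching anything unattended.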

The next layer adds the intelligence: usage pattern learning, model-aware budget tracking across all three tiers, project profiles with per-model cost estimates, Kanban integration for dynamic priority, a reservation system for protecting interactive sessions, and the economic dispatch engine that routes tasks to the right model at the right time.

The architecture is deliberately modular. The scheduler's decision engine is designed to be extended with new decision factors — each new capability (demand forecasting, model routing, reservation management) plugs into the same evaluation loop without restructuring the core.
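One way such a pluggable evaluation loop could look, as a sketch rather than TokenPilot's design: each decision factor is a function that scores a candidate task, a score of negative infinity acts as a veto, and new factors register without touching the loop. All names here are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass
class Context:
    tier: str
    est_tokens: int
    priority: float
    remaining: dict[str, int]  # remaining budget per tier this window
    reserved: dict[str, int]   # tokens held for interactive sessions


# A decision factor scores a candidate task; -inf vetoes it outright.
Factor = Callable[[Context], float]


class DecisionEngine:
    def __init__(self) -> None:
        self.factors: list[Factor] = []

    def register(self, factor: Factor) -> Factor:
        """Add a factor; works as a decorator so new capabilities plug in."""
        self.factors.append(factor)
        return factor

    def evaluate(self, ctx: Context) -> tuple[bool, float]:
        total = 0.0
        for factor in self.factors:
            score = factor(ctx)
            if score == -math.inf:
                return False, score  # any veto short-circuits the loop
            total += score
        return total > 0, total


engine = DecisionEngine()


@engine.register
def budget_guard(ctx: Context) -> float:
    # Veto if running the task would eat into reserved interactive capacity.
    headroom = ctx.remaining[ctx.tier] - ctx.reserved.get(ctx.tier, 0)
    return -math.inf if ctx.est_tokens > headroom else 0.0


@engine.register
def priority_score(ctx: Context) -> float:
    return ctx.priority


ok = Context("opus", 10_000, 2.0, {"opus": 50_000}, {"opus": 40_000})
too_big = Context("opus", 20_000, 2.0, {"opus": 50_000}, {"opus": 40_000})
print(engine.evaluate(ok))       # (True, 2.0)
print(engine.evaluate(too_big))  # (False, -inf)
```

Demand forecasting or model routing would each become another registered function, leaving `evaluate` unchanged.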

The Road Ahead

We're in the early innings of the token economy. Today's fixed-allocation plans are the first generation. Tomorrow's will likely include tiered pricing within allocations, token markets between users, and enterprise-grade capacity planning tools.

The users and teams that develop fluency in token economics now — who learn to think about AI capacity the way grid operators think about electricity — will have a structural advantage. Not because they'll spend less, but because they'll extract more value per dollar of AI compute.

The power grid analogy isn't just a metaphor. It's a roadmap. Every optimization technique that works for electrons will eventually work for tokens. The question is who builds the tools first.
