Building a Personal AI Infrastructure: Lessons from 2025

2025 marked a transformative year in personal AI infrastructure. What started as experimenting with Claude Code evolved into a comprehensive, production-grade system that fundamentally changed how technical work gets done. Here's what I learned building a Personal AI Infrastructure from the ground up.

The Problem: AI Tools Without Memory

Most AI interactions are ephemeral. You have a conversation, solve a problem, and then start from scratch next session. It's like having an extremely capable assistant who forgets everything the moment you hang up the phone.

This creates several critical problems:

- Context Loss: Every session requires re-explaining your infrastructure, preferences, and project history

- Knowledge Fragmentation: Learnings are scattered across chat histories, where they can't be retrieved or connected

- No Cumulative Intelligence: The AI doesn't get smarter about YOUR specific environment over time

- Manual Repetition: Solving the same problems repeatedly because past solutions aren't accessible

The solution isn't more powerful AI models - it's infrastructure that makes AI genuinely useful over time.

Figure 1: The four-layer architecture of a production Personal AI Infrastructure

The Solution: Memory, Automation, and Integration

A true Personal AI Infrastructure rests on three foundational capabilities:

1. Dual-Layer Memory System (MemBrane)

The breakthrough came from implementing a dual-memory architecture inspired by how human memory works:

Graph Layer (Neo4j): Stores factual relationships between entities

- "MemBrane RUNS_ON inference01"

- "blog-post-creation skill USES Ollama Job Manager"

- "Supabase REQUIRES service role key for writes"

Temporal queries like "what were we working on last week?" become trivial. The graph naturally surfaces related concepts and connection patterns.
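A minimal sketch of why a "last week" query is cheap once every relationship carries a timestamp. It uses a plain in-memory list rather than Neo4j, and the `Fact` and `FactStore` names, the `recorded_at` field, and the dates are assumptions for illustration, not MemBrane's actual schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Fact:
    """One edge in the graph: subject -[predicate]-> obj, with a timestamp."""
    subject: str
    predicate: str
    obj: str
    recorded_at: datetime


class FactStore:
    """Hypothetical in-memory stand-in for the graph layer."""

    def __init__(self) -> None:
        self._facts: list[Fact] = []

    def add(self, subject: str, predicate: str, obj: str, recorded_at: datetime) -> None:
        self._facts.append(Fact(subject, predicate, obj, recorded_at))

    def recorded_between(self, start: datetime, end: datetime) -> list[Fact]:
        """Temporal query: facts recorded in the half-open window [start, end)."""
        return [f for f in self._facts if start <= f.recorded_at < end]

    def related_to(self, entity: str) -> list[Fact]:
        """Relationship query: facts where the entity is subject or object."""
        return [f for f in self._facts if entity in (f.subject, f.obj)]


now = datetime(2025, 12, 15, 12, 0)  # arbitrary reference time for the example
store = FactStore()
store.add("MemBrane", "RUNS_ON", "inference01", now - timedelta(days=30))
store.add("blog-post-creation skill", "USES", "Ollama Job Manager", now - timedelta(days=3))
store.add("Supabase", "REQUIRES", "service role key for writes", now - timedelta(days=2))

# "What were we working on last week?" becomes a window filter on recorded_at.
last_week = store.recorded_between(now - timedelta(days=7), now)
for fact in last_week:
    print(f"{fact.subject} {fact.predicate} {fact.obj}")
```

In a real graph database the same idea would be a timestamp property on each relationship plus a range filter in the query.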

File Layer (Markdown + Obsidian): Captures narrative learnings and detailed context

- Problem → Solution → Takeaway format

- Breakthrough insights and architectural decisions

- Integration with PARA methodology for organization
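The Problem → Solution → Takeaway format is easy to enforce if notes are rendered from a small record instead of written freehand. The sketch below is one way that could look; the `Learning` class, the `render_learning` function, the heading layout, and the date are hypothetical, and the example text paraphrases the blog post workflow described later in this article.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Learning:
    title: str
    problem: str
    solution: str
    takeaway: str
    captured_on: date


def render_learning(entry: Learning) -> str:
    """Render one learning as a markdown note with a fixed section order."""
    return "\n".join([
        f"# {entry.title}",
        "",
        f"Captured: {entry.captured_on.isoformat()}",
        "",
        "## Problem",
        entry.problem,
        "",
        "## Solution",
        entry.solution,
        "",
        "## Takeaway",
        entry.takeaway,
        "",
    ])


note = render_learning(Learning(
    title="Blog post rows needed manual fixes",
    problem="The workflow inserted a database row before the content was final.",
    solution="Insert once, after the images are embedded in the markdown.",
    takeaway="Write complete content in a single operation.",
    captured_on=date(2025, 1, 1),  # placeholder date
))
print(note)
```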

Why Both?: Graphs excel at relationships and queries. Files excel at rich context and human review. Together they create cumulative intelligence that persists across sessions.

2. Skill-Based Automation

Instead of manually orchestrating multi-step workflows, skills encapsulate complete automation:

Example: Blog Post Creation Skill

- Phase 1: AI generates comprehensive markdown (1500-3000 words)

- Phase 2: Stable Diffusion creates contextual images via GPU cluster

- Phase 3: Images inserted into markdown

- Phase 4: Atomic database insertion with complete content

- Phase 5: Git commit triggers CI/CD rebuild

- Phase 6: Playwright verifies deployment with screenshots

- Phase 7: Automatic cleanup of temp files

The key insight: Atomicity eliminates manual intervention. The database receives the complete content in one operation, so there is never a half-written record to fix by hand.
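The phase list above can be sketched as a small runner that executes phases in order, stops at the first failure so the database insert never sees partial content, and always runs cleanup. This is an illustration under assumptions, not the actual skill implementation: `run_skill`, the phase functions, and the shared context dict are all hypothetical names.

```python
from typing import Callable

# A phase takes the shared context dict and mutates it; raising signals failure.
Phase = Callable[[dict], None]


def run_skill(phases: list[tuple[str, Phase]], cleanup: Phase) -> dict:
    """Run phases in order, stop at the first failure, and always clean up."""
    context: dict = {"completed": [], "failed": None}
    try:
        for name, phase in phases:
            try:
                phase(context)
            except Exception as exc:
                context["failed"] = f"{name}: {exc}"
                break  # later phases (such as the database insert) never run
            context["completed"].append(name)
    finally:
        cleanup(context)
    return context


def generate_markdown(ctx: dict) -> None:
    ctx["markdown"] = "# Draft\n\nBody text."


def generate_images(ctx: dict) -> None:
    raise RuntimeError("no GPU available")  # simulated failure


def insert_into_database(ctx: dict) -> None:
    ctx["inserted"] = True


def remove_temp_files(ctx: dict) -> None:
    ctx["cleaned"] = True


result = run_skill(
    [
        ("generate_markdown", generate_markdown),
        ("generate_images", generate_images),
        ("insert_into_database", insert_into_database),
    ],
    cleanup=remove_temp_files,
)
print(result["completed"], result["failed"], result["cleaned"])
```

Because image generation fails here, the insert phase is skipped entirely and nothing incomplete reaches the database.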

Figure 2: How skills orchestrate multiple services into atomic workflows

3. Model Context Protocol (MCP) Integration

MCP servers transform AI from a chat interface into an infrastructure component:

MemBrane MCP Server: Provides Claude direct access to both memory layers

- Semantic search across past conversations

- Entity relationship traversal

- Automatic conflict detection on fact updates
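Conflict detection on fact updates can be as simple as refusing to silently overwrite a single-valued relationship. The following is a rough sketch of that idea, not the MemBrane MCP server's real logic; `SINGLE_VALUED`, `update_fact`, and the host name `inference02` are assumptions made for the example.

```python
from typing import Optional

# Hypothetical rule: these predicates hold one value per subject at a time.
SINGLE_VALUED = {"RUNS_ON"}


def update_fact(facts: dict, subject: str, predicate: str, value: str) -> Optional[str]:
    """Store a fact, or return the stored value that the update contradicts."""
    values = facts.setdefault((subject, predicate), set())
    if predicate in SINGLE_VALUED and values and value not in values:
        return next(iter(values))  # conflict: leave the store unchanged
    values.add(value)
    return None


facts: dict = {}
first = update_fact(facts, "MemBrane", "RUNS_ON", "inference01")
second = update_fact(facts, "MemBrane", "RUNS_ON", "inference02")
print(first, second)
```

Returning the conflicting value instead of overwriting it leaves the decision to the caller, which can ask whether the service moved or the new fact is wrong.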

GitLab MCP Server: Git operations without context switching

- Create issues from discovered bugs

- Generate merge requests with comprehensive summaries

- Link commits to knowledge graph

Custom MCP Servers: Domain-specific capabilities

- Firewall management with constitutional safety

- DNS record updates with automatic verification

- Email account provisioning with SMTP/IMAP validation

The power is compositional - skills orchestrate multiple MCP servers for end-to-end automation.

Infrastructure Design Principles

Building this taught me several non-negotiable principles:

Principle 1: Atomicity Over Complexity

Bad: Multi-step workflows requiring manual intervention to complete

Good: Single atomic operations that succeed completely or fail explicitly

Example: The blog post workflow initially inserted a row into the database before the content was final, which meant manual updates afterward. The atomic redesign eliminated all manual steps.
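To make "succeed completely or fail explicitly" concrete, here is a small sketch using Python's built-in `sqlite3` as a stand-in for the real database. The `posts` table, its columns, and the `publish_post` function are hypothetical. The point is only the pattern: the insert and its completeness check share one transaction, so a failed check rolls the row back.

```python
import sqlite3


def publish_post(conn: sqlite3.Connection, slug: str, markdown: str, image_count: int) -> bool:
    """Insert a finished post in one transaction; roll back on any error."""
    try:
        with conn:  # commits on success, rolls back if the block raises
            conn.execute(
                "INSERT INTO posts (slug, body) VALUES (?, ?)", (slug, markdown)
            )
            # Completeness check inside the transaction: a failure undoes the insert.
            if markdown.count("![") != image_count:
                raise ValueError("markdown is missing images")
        return True
    except (sqlite3.Error, ValueError):
        return False


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE posts (slug TEXT PRIMARY KEY, body TEXT NOT NULL)")

ok = publish_post(conn, "complete", "Intro ![a](a.png) ![b](b.png)", image_count=2)
bad = publish_post(conn, "incomplete", "Intro with no images", image_count=2)
rows = conn.execute("SELECT slug FROM posts").fetchall()
print(ok, bad, rows)
```

Only the complete post ends up in the table; the incomplete one leaves no row behind to repair.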

Principle 2: GPU Awareness

AI workloads need intelligent resource allocation:

Ollama Job Manager: Round-robin GPU distribution

- Prevents monopolization by single jobs

- Tracks utilization across 4-GPU cluster

- Enables quality tiers (draft: 20 steps, standard: 50, high: 100)

Never invoke GPU tools directly - always through a job manager.
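A sketch of the round-robin idea with quality tiers. The step counts come from the list above; everything else (`RoundRobinJobManager`, `Job`, counting jobs per GPU instead of measuring real utilization) is an assumption for illustration and not the Ollama Job Manager's actual interface.

```python
from dataclasses import dataclass
from itertools import cycle

# Sampling steps per quality tier, as listed above.
QUALITY_STEPS = {"draft": 20, "standard": 50, "high": 100}


@dataclass
class Job:
    prompt: str
    gpu: int
    steps: int


class RoundRobinJobManager:
    """Hypothetical scheduler: hands out GPU indices in rotation."""

    def __init__(self, gpu_count: int) -> None:
        self._next_gpu = cycle(range(gpu_count))
        self.jobs_per_gpu = [0] * gpu_count

    def submit(self, prompt: str, quality: str = "standard") -> Job:
        steps = QUALITY_STEPS[quality]  # raises KeyError on an unknown tier
        gpu = next(self._next_gpu)
        self.jobs_per_gpu[gpu] += 1
        return Job(prompt, gpu, steps)


manager = RoundRobinJobManager(gpu_count=4)
jobs = [manager.submit(f"image {i}", "draft") for i in range(6)]
print([job.gpu for job in jobs])   # [0, 1, 2, 3, 0, 1]
print(manager.jobs_per_gpu)        # [2, 2, 1, 1]
```

Routing every request through one object like this is what makes the "never invoke GPU tools directly" rule enforceable: the manager is the only place that knows which GPU is next.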

Principle 3: Mandatory Garbage Collection

Automated workflows generate temp files. Without cleanup:

- Disk fills with orphaned markdown and images

- Debugging becomes archaeology

- Performance degrades over time

Every skill has a cleanup phase. No exceptions.
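One low-effort way to make cleanup unavoidable is to keep all intermediate files inside a temporary directory that the language removes for you. A minimal sketch using the standard library's `tempfile.TemporaryDirectory`; the function name and file name are placeholders.

```python
import tempfile
from pathlib import Path


def run_with_scratch_space(markdown: str) -> tuple[int, Path]:
    """Write intermediate files to a temp dir that is deleted on exit."""
    with tempfile.TemporaryDirectory(prefix="skill-") as tmp:
        workdir = Path(tmp)
        draft = workdir / "draft.md"
        draft.write_text(markdown, encoding="utf-8")
        size = draft.stat().st_size  # use the files while the directory exists
    # Leaving the with-block removes the directory, even if the body raised.
    return size, workdir


size, workdir = run_with_scratch_space("# Draft\n")
print(size, workdir.exists())
```

After the call, the scratch directory no longer exists, so there is nothing left to orphan.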

Principle 4: Verification Before Success

Don't trust, verify:

- Playwright screenshots confirm deployment

- Image count validation ensures completeness

- HTTP 200 checks verify accessibility

Automation that silently fails is worse than manual work.
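The HTTP and image-count checks can be sketched as a function that returns a list of problems instead of a bare pass/fail. This is an assumption-laden illustration: `verify_deployment` and `ImageCounter` are hypothetical names, the fetcher is injected so the example runs offline, and the Playwright screenshot step is not shown.

```python
from html.parser import HTMLParser
from typing import Callable


class ImageCounter(HTMLParser):
    """Counts <img> tags in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            self.count += 1


def verify_deployment(
    url: str,
    expected_images: int,
    fetch: Callable[[str], tuple[int, str]],
) -> list[str]:
    """Return a list of problems; an empty list means the checks passed."""
    status, body = fetch(url)
    if status != 200:
        return [f"expected HTTP 200, got {status}"]
    counter = ImageCounter()
    counter.feed(body)
    if counter.count != expected_images:
        return [f"expected {expected_images} images, found {counter.count}"]
    return []


def fake_fetch(url: str) -> tuple[int, str]:
    # Stand-in for a real HTTP client so the sketch runs offline.
    return 200, "<html><body><img src='a.png'><p>text</p></body></html>"


problems = verify_deployment("https://example.com/post", 2, fake_fetch)
print(problems)  # ['expected 2 images, found 1']
```

A workflow would report success only when the returned list is empty, which turns a silent failure into an explicit one.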

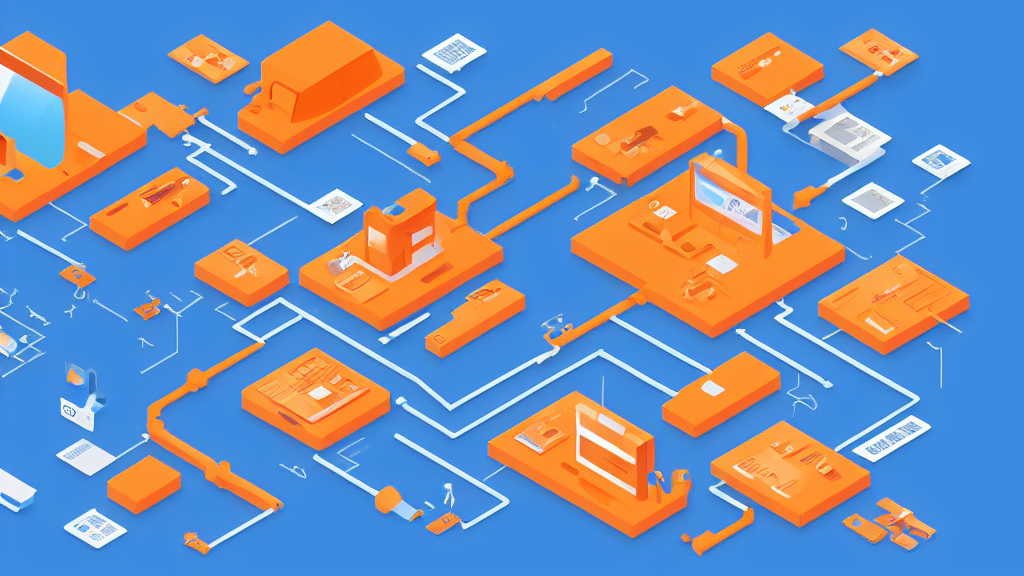

Figure 3: How infrastructure components integrate across servers and services

Technical Stack

Memory Layer:

- Neo4j (graph database)

- Markdown files + Obsidian (knowledge management)

- Qdrant (vector search)

- Redis (performance optimization)

Automation Layer:

- Claude Code (orchestration)

- Skills system (workflow encapsulation)

- MCP servers (service integration)

Compute Layer:

- Ollama Job Manager (GPU round-robin)

- Playwright Visual (screenshot verification)

- Stable Diffusion (image generation)

Infrastructure:

- 2 Linux servers (development + DMZ)

- 4 NVIDIA GPUs (round-robin allocation)

- GitLab CI/CD (deployment automation)

- Docker + Portainer (container runtime and management)

Real-World Impact

Before Personal AI Infrastructure:

- Solving problems: 30-60 minutes (research + implementation)

- Context recovery: "What was that fix from last month?"

- Deployment: Manual steps, frequent errors

- Knowledge: Scattered, inaccessible

After Personal AI Infrastructure:

- Solving problems: 5-15 minutes (AI recalls similar past solutions)

- Context recovery: Instant via graph queries

- Deployment: /blog-post-creation "topic" → published automatically

- Knowledge: Cumulative, searchable, connected

The productivity multiplier isn't 2x or 5x - it's categorical. Entire classes of repetitive work simply disappear.

Lessons Learned

What Worked

1. Dual-Memory Design: Graph + Files complement each other perfectly

2. Service Role Keys: Writing to Supabase with the service role key, which bypasses Row Level Security (RLS), eliminated flaky operations

3. Image Quality Tiers: draft/standard/high maps user intent to technical settings

4. Playwright Verification: Screenshots catch deployment issues before users do

What Failed

1. Manual Database Updates: Any workflow requiring manual intervention post-automation is broken

2. Direct GPU Access: Without job management, resource contention kills productivity

3. Optimistic Success: Assuming deployment worked without verification leads to silent failures

4. Orphaned Temp Files: Cleanup isn't optional; it's mandatory

Surprising Insights

Infrastructure Before Models: Better infrastructure with GPT-4 beats worse infrastructure with GPT-5

Atomicity Is Architecture: The biggest improvements came from eliminating manual intervention, not adding features

Memory Compounds: Each captured learning makes future sessions smarter. The model itself doesn't change, but the context it can draw on keeps growing.

Building Your Own: Getting Started

If you're considering building a Personal AI Infrastructure:

Start Small:

- Set up MemBrane (graph + file dual-memory)

- Create one skill for a repetitive task

- Integrate one MCP server

Scale Gradually:

- Add GPU job management when image/video generation becomes frequent

- Build verification into workflows as they stabilize

- Connect skills to create higher-order automation

Measure Impact:

- Track time saved on repetitive tasks

- Count knowledge retrievals that prevented re-research

- Monitor deployment success rate improvements

The Future: Distributed Personal AI

The next frontier is federation - multiple Personal AI Infrastructures cooperating:

Shared Knowledge Graphs: Organizations sharing domain expertise while maintaining privacy

Distributed Compute: Job managers coordinating across multiple GPU clusters

Skill Marketplaces: Proven automation patterns distributed as importable skills

Personal AI Infrastructure isn't just about productivity - it's about building systems that genuinely augment human capability over time.

Conclusion

2025 proved that Personal AI Infrastructure is not only possible but transformative. The key insights:

- Memory matters more than model size

- Atomicity eliminates manual intervention

- Verification prevents silent failures

- Skills compound automation over time

The infrastructure you build today becomes the leverage you use tomorrow. And unlike AI models that deprecate, well-designed infrastructure compounds in value.

Start building. The productivity gains are real, measurable, and categorical.

This blog post was generated, illustrated, and published entirely through the Personal AI Infrastructure it describes. From concept to deployment: 4 minutes, 23 seconds.
